Introduction

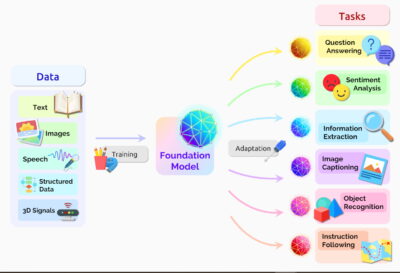

The field of artificial intelligence (AI) has recently seen rapid growth in research, tool development, and software system deployment. Many software development companies are shifting their focus to intelligent software systems by integrating AI into their existing processes. At the same time, the academic research community is applying AI paradigms to traditional software engineering (SE) problems and has achieved promising results. AI has also proved useful in addressing major challenges that the SE field faces. Indeed, different AI paradigms (such as neural networks, machine learning, knowledge-based systems, and natural language processing) can be applied to different SE phases (i.e., requirements, design, development, testing, release, and maintenance) to improve the process and reduce human effort in tedious and cumbersome tasks (e.g., testing and debugging).

Our research group studies how to apply AI to current challenges in the SE phases of the software development lifecycle. We aim to leverage AI paradigms to improve existing processes and eventually automate some SE phases, such as requirements engineering, testing, and debugging. We also apply software methodology and architecture to the development of AI applications. See the slides here for more detail.

Contact: Dr. Bui Thi Mai Anh, Email: anhbtm@soict.hust.edu.vn

Research Directions

- Intelligent Requirements Engineering and Planning: Requirements and planning is the first stage of the SE process; it establishes the building blocks of a software system. Software requirements describe a software application by specifying its main objectives and goals. Requirements engineering is the discipline of establishing user requirements and specifying software systems. Studies in this field aim to automatically extract requirement information from users (which is often expressed in natural language) and to represent software requirements systematically (using modeling or specification languages such as UML). Applying natural language processing (NLP) and machine learning/deep learning is a trending direction in this field. Beyond automated requirements engineering, multi-objective evolutionary algorithms (MOEAs) are used to select software requirements and schedule the development process, which helps project managers plan effectively and estimate software cost.

- Automated Software Refactoring: Refactoring is one of the most expensive parts of software implementation. Code refactoring is intended to improve the design, structure, and/or implementation of software systems while preserving their functionality. We combine search-based methods (using metaheuristics) with machine learning approaches to automatically suggest refactoring solutions.

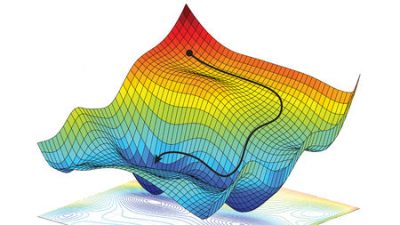

- Search Based Software Testing: Software testing is one of the major targets of AI-driven methods. Testing is the process of executing a program or application with the intent of finding software defects and bugs by using a given test suite (a set of test cases). Research in this field addresses the problem of how to design effective test cases that detect as many software defects and bugs as possible. This problem is formulated as an optimization problem: minimizing the number of generated test cases while maximizing the detection of bugs/faults. We aim to apply search-based optimization algorithms such as evolutionary algorithms (EAs), genetic algorithms (GAs), and particle swarm optimization (PSO) to tackle this problem.

- Software Defect Prediction: Software maintenance is defined by the IEEE as the modification of a software product after delivery to correct faults, to improve performance or to adapt the product to a modified environment. Mining user feedback is essential for software engineers. We aim to apply ML techniques and NLP to explore user feedback in order to automatically predict software defects, including bug localization and fault localization, and to automatically repair them.

- Automated Program Repair using Machine Learning techniques: As computing advances, software applications are becoming increasingly common in every real-world domain. This growth makes software reliability critical, since faulty programs may jeopardize digital assets. Unfortunately, reliability is constantly threatened by numerous problems, especially software bugs, which are pervasive in software systems. To limit the damage caused by bugs, developers need to fix them promptly. However, manual bug fixing is notoriously tricky, tedious, and time-consuming. This project aims to create an automated program repair system based on machine learning techniques to reduce manual bug-fixing effort. We first investigate the performance of learning-based automated program repair techniques on standard benchmarks such as Defects4J and Codeflaws. We then analyze the results to identify the main challenges facing learning-based techniques. Finally, we propose novel learning-based program repair techniques to address these challenges.

- Vulnerability Detection in Machine Learning Frameworks: Smart systems increasingly depend on machine learning (ML) frameworks, e.g., TensorFlow, to implement their features. These frameworks are built on top of many third-party libraries, which in turn depend on many others. Simply trusting and reusing a framework poses a security risk, as the framework and its direct and transitive dependencies can contain exploitable vulnerabilities. To mitigate this risk, this project will create an advanced software composition analysis solution that scans dependency hierarchies and builds new deep learning architectures to analyse code and document repository data and flag vulnerabilities. Further, each flagged vulnerability will be checked for reachability using our new directed grammar-based fuzzing solution, which generates valid test cases (following predefined grammars) and drives test executions toward the vulnerable code. Our solution targets vulnerabilities hidden deep in ML framework dependencies, which are hard for a classic fuzzer to uncover and for framework developers to recognize because they appear in third-party code.

- Development of resource optimization solutions using Nash equilibrium and multi-objective algorithms for some applications: We will develop a multi-objective optimization model that uses the Unified Model to model game-theoretic conflicts and resource allocation based on Nash equilibrium. To solve it, we will test optimization methods such as metaheuristics, evolutionary algorithms, genetic algorithms, particle swarm optimization, and ant colony optimization. The methods will be applied to real-time data for problems such as resource management in software projects or freight optimization in logistics management.

- Development of AI applications:

  - Stock price prediction: Building machine learning, deep learning (convolutional neural networks, natural language processing, long short-term memory), and reinforcement learning models to predict the direction of the next day's stock price from historical data.
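The search-based formulation described above for Search Based Software Testing can be illustrated with a small sketch. It is not the group's implementation: the target branch `x == 2 * y and x > 100`, the function names, and all parameter values are hypothetical, chosen only to show how a branch-distance fitness function can guide a simple genetic algorithm (truncation selection, crossover, mutation) toward an input that covers a hard-to-reach branch.

```python
import random


def branch_distance(x: int, y: int) -> int:
    """Distance to taking the hypothetical branch `x == 2 * y and x > 100`.

    0 means the branch is taken; larger values mean the input is further away.
    """
    d_eq = abs(x - 2 * y)       # how far we are from x == 2 * y
    d_gt = max(0, 101 - x)      # how far we are from x > 100
    return d_eq + d_gt


def evolve_test_input(seed: int = 0, pop_size: int = 40, generations: int = 500):
    """Search for an (x, y) pair that minimizes the branch distance."""
    rng = random.Random(seed)
    population = [(rng.randint(-1000, 1000), rng.randint(-1000, 1000))
                  for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=lambda ind: branch_distance(*ind))
        if branch_distance(*population[0]) == 0:
            break                                   # target branch covered
        parents = population[: pop_size // 2]       # keep the better half (elitism)
        children = []
        while len(parents) + len(children) < pop_size:
            (x, _), (_, y) = rng.sample(parents, 2)  # crossover: x from one parent, y from another
            if rng.random() < 0.5:                   # mutation: small random step on x
                x += rng.randint(-10, 10)
            if rng.random() < 0.5:                   # mutation: small random step on y
                y += rng.randint(-10, 10)
            children.append((x, y))
        population = parents + children
    return min(population, key=lambda ind: branch_distance(*ind))
```

Calling `evolve_test_input()` returns the best input found; when `branch_distance(*best)` is 0, that input exercises the target branch. Because the better half of each generation is kept unchanged, the best distance never gets worse from one generation to the next.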

Research Problems

- Test case generation: Generating effective test cases that cover as many testing criteria as possible by using evolutionary algorithms such as genetic algorithms (GA), particle swarm optimization (PSO), and grey wolf optimization (GWO). Combining optimization algorithms with constraint-based local search to generate test suites more effectively.

- Requirement Mining: Using NLP techniques to examine user requirements and transform them from natural language into requirement models (use case models, activity models), then applying evolutionary algorithms to generate test cases from these models (from the perspective of black-box testing).

- Requirements Selection: Prioritizing software requirements is an optimization task. The selection of software requirements depends on the project deadline as well as on the financial budget. This motivates us to study multi-objective metaheuristic algorithms for selecting requirements effectively during the software development process.

- Automated Software Refactoring: Code smells are certain structures in the code that indicate the violation of fundamental design principles and negatively impact design quality. We aim to apply machine learning and deep learning techniques to detect code smells and suggest refactoring solutions by using evolutionary algorithms.

- Bug Localization: During software development, bug tracking systems (such as Bugzilla and JIRA) are used to report and manage bugs. Bug localization is the task of locating the potentially buggy source code files in a software project, given a bug report. The major challenge comes from the lexical mismatch between the natural language used to write bug reports and the programming languages used to write source files. We aim to apply deep learning models to narrow this lexical gap and to improve the performance of existing bug localization models. We also address the class imbalance problem in this field: for a given bug report, buggy files make up only a small fraction of the source files. Common approaches are either data-driven (e.g., oversampling, undersampling) or model-driven (e.g., bootstrapping, reinforcement learning, cost-sensitive learning, ensemble learning).

- Fault Localization: While bug localization focuses on locating buggy source files, fault localization (FL) works from test executions: given the execution of test cases, an FL tool identifies a set of suspicious lines of code together with their suspiciousness scores. We apply machine learning and deep learning techniques to analyze source code and detect faulty statements at the method level. We also exploit method features derived from code complexity metrics, mutation-based formulas, etc. (often yielding more than 200 features) to evaluate the sensitivity of features and to examine the constraints among features, with the goal of improving the performance of FL models.

- Stock Price Prediction Problems:

  - Insufficient data

    - Using new techniques such as transfer learning, ensemble machine learning models, data augmentation, and synthetic data

  - Imbalanced data

    - Resampling the training samples, e.g., over-sampling the minority class, under-sampling the majority class, or combining the two methods

  - Feature engineering

    - Finding important information in the given data

    - Finding new features that help improve the performance of the trained model
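The resampling idea mentioned above for imbalanced data (in both bug localization and stock price prediction) can be sketched as follows. This is a minimal illustration of random over-sampling using only the Python standard library; the function name `random_oversample` and its parameters are hypothetical and not taken from the group's code.

```python
import random
from collections import Counter


def random_oversample(samples, labels, seed=0):
    """Duplicate randomly chosen samples of smaller classes until all classes
    have as many samples as the largest class."""
    rng = random.Random(seed)
    target = max(Counter(labels).values())      # size of the majority class
    by_class = {}
    for sample, label in zip(samples, labels):
        by_class.setdefault(label, []).append(sample)

    out_samples, out_labels = [], []
    for label, group in by_class.items():
        # Draw extra copies (with replacement) for under-represented classes.
        extra = [rng.choice(group) for _ in range(target - len(group))]
        for sample in group + extra:
            out_samples.append(sample)
            out_labels.append(label)
    return out_samples, out_labels
```

For example, with 2 samples labeled as buggy and 8 labeled as clean, the function returns 16 samples, 8 per class, where the extra buggy samples are copies of the original 2. Resampling of this kind would normally be applied to the training split only, so that evaluation data keeps its original class distribution.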

Team Members

Latest publications

Publications in 2025

- Nguyen Nhat Hai, Nguyen Thi Thu, Cao Minh Son. ViFin-Gen: Efficient Vietnamese Instruction Dataset Generation Pipeline for Finance Domain. 2024 International Conference on Advanced Technologies for Communications (ATC). 41-46. Hồ Chí Minh, Việt Nam. 17/10/2024

- Anh Ho, Anh M.T. Bui, Phuong T. Nguyen, Amleto Di Salle, Bach Le. EnseSmells: Deep ensemble and programming language models for automated code smells detection. Journal of Systems and Software. 06/02/2025

Publications in 2024

- Yen-Trang Dang, Thanh Le-Cong, Phuc-Thanh Nguyen, Anh M. T. Bui, Phuong T. Nguyen, Bach Le, Quyet-Thang Huynh. LEGION: Harnessing Pre-trained Language Models for GitHub Topic Recommendations with Distribution-Balance Loss. The 28th International Conference on Evaluation and Assessment in Software Engineering (EASE). 181-190. Salerno, Italy. 18/06/2024

- T. K. Lai, and I. L. Ngo. An investigation on the thermo-electrohydraulic performance of novel ECF micro-pump. International Journal of Heat and Mass Transfer. 29/09/2024

- Nguyen Nhat Hai; Ha Huu Linh; Nguyen Trong Khanh. Visual Searching for a large-scale image database using advanced and experimental methods. Kỷ yếu Hội nghị Khoa học công nghệ Quốc gia lần thứ XV về Nghiên cứu cơ bản và ứng dụng Công nghệ thông tin (FAIR); Hà Nội, ngày 08-09/08/2024. 872-877. Học viện Công nghệ Bưu chính Viễn thông - Hà Nội. 08/08/2024

- Bui Quoc Trung, Vuong Hoang Minh, Nguyen Thi Hoai Linh, Bui Thi Mai Anh. A Novel Dynamic Programming Method for Non-Parametric Data Discretization. Intelligent Information and Database Systems - ACIIDS 2024. 111-120. UAE. 15/04/2024

- Phi Ho Truong, Ngoc Minh Pham, Duy Trung Pham, Nhat Hai Nguyen. AYO-GAN: A novel GAN-based adversarial attack on YOLO object detection models. The 13th International Symposium on Information and Communication Technology (SOICT 2024). 32. Đà Nẵng, Việt Nam. 13/12/2024

- Truong Phi Ho, Pham Duy Trung, Dang Vu Hung, Nguyen Nhat Hai. Proposed method for against adversarial images based on ResNet architecture. Journal of Science and Technology on Information Security. 69-82. 01/09/2024

- Sikandar Ali Qalati, MengMeng Jiang, Samuel Gyedu, and Emmanuel Kwaku Manu. Do Strong Innovation Capability and Environmental Turbulence Influence the Nexus Between Customer Relationship Management and Business Performance? Business Strategy and the Environment. 02/07/2024

- T. K. Lai, K. D. Tran, and I. L. Ngo. A numerical study on the thermo-electrohydrodynamic performance of ECF micro-pumps. Sustainability and Emerging Technologies for Smart Manufacturing. 29/04/2024

- Ha An Le, Trinh Van Chien, Van Duc Nguyen, Wan Choi. Channel Analysis and End-to-End Design for Double RIS-Aided Communication Systems with Spatial Correlation and Finite Scatterers. IEEE Conference on Global Communications (GLOBECOM). 1561-1566. 03/08/2023

- Phi-Ho Truong, Tien-Dung Nguyen, Xuan-Hung Truong, Nhat-Hai Nguyen and Duy-Trung Pham. Employing a CNN Detector to Identify AI-Generated Images and Against Attacks on AI Systems. The first IEEE International Conference on Cryptography and Information Security - VCRIS 2024. 72-78. Hà Nội, Việt Nam. 03/12/2024

- JYE Tin, WW Tan, AA Bakar, MS Mahali, FF Lothai, NF Mohammad, SSA Hassan & KF Chin. A Conceptual Design of Sustainable Solar Photovoltaic (PV) Powered Corridor Lighting System with IoT Application. ICREEM 2022. 09/03/2024

- Duc Anh Le, Anh M.T. Bui, Phuong T. Nguyen, Davide Di Ruscio. Good things come in three: Generating SO Post Titles with Pre-Trained Models, Self Improvement and Post Ranking. The 18th ACM/IEEE International Symposium on Empirical Software Engineering and Measurement. 14-26. Spain. 20/10/2024

- Sikandar Ali Qalati, Domitilla Magni, and Faiza Siddiqui. Senior Management's Sustainability Commitment and Environmental Performance: Revealing the Role of Green Human Resource Management Practices. Business Strategy and the Environment. 02/08/2024

- Hoang-Anh Dang; Van-Dung Dao; Chi-Dung Dang; Nhat Hai Nguyen; Anh Hoang. Identifying Abnormal Energy Consumption Patterns in Industrial Settings: Application of Local Outlier Factor Algorithm for a Processing Factory in Vietnam. 2023 Asia Meeting on Environment and Electrical Engineering (EEE-AM). 1-5. 03/12/2023

- T. K. Lai, and I. L. Ngo. A new design and optimization of VD-ECF micro-pump: Advancements in electrohydraulic performance. Physics of Fluids. 29/07/2024

- T. K. Lai, and I. L. Ngo. An investigation on the electrohydraulic performance of novel ECF micro-pump with NACA-shaped electrodes. Theoretical and Computational Fluid Dynamics. 29/02/2024

- Nguyen Nhat Hai, Nguyen Thu, Cao Minh Son, Nguyen Trong Khanh. Impact of Tokenizer in Pretrained Language Models for Finance Domain. Kỷ yếu Hội nghị Khoa học công nghệ Quốc gia lần thứ XV về Nghiên cứu cơ bản và ứng dụng Công nghệ thông tin (FAIR); Hà Nội, ngày 08-09/08/2024. 388-392. Học viện Công nghệ Bưu chính Viễn thông - Hà Nội. 08/08/2024

- Nguyễn Đức Lộc, Bùi Thị Mai Anh. Leveraging LSTM and Pre-trained Models for Effective Summarization of Stack Overflow Posts. The 40th International Conference on Software Maintenance and Evolution (ICSME 2024). 618-623. USA. 06/10/2024

- Thi-Mai-Anh Bui, Van-Tri Do, Minh Vu Le, Quoc-Trung Bui. Dynamic Difficulty Coefficient in Search-Based Software Testing: Targeting to Hard Branch Coverage. Genetic and Evolutionary Computation Conference - GECCO 2024. 791-794. Melbourne. 14/07/2024

Publications in 2023

- Nguyễn Đức Ca, Phan Thị Thu, Hoàng Thị Minh Anh, Phạm Ngọc Dương, Nguyễn Hoàng Giang, Nguyễn Lệ Hằng. Nâng cao hiệu quả quản trị đại học trong bối cảnh đổi mới giáo dục tại Việt Nam. Tạp chí khoa học giáo dục Việt Nam. 14/03/2023

- Bùi Thị Mai Anh, Dương Việt Anh, Bùi Quốc Trung. A Filter Approach Based on Binary Integer Programming for Feature Selection. RIVF 2022. 677-682. Ho Chi Minh City. 20/12/2022

- Truong Phi Ho, Truong Quang Binh, Nguyen Vinh Quang, Nguyen Nhat Hai, Pham Duy Trung. ENHANCES THE ROBUSTNESS OF DEEP LEARNING MODELS USING ROBUST SPARSE PCA TO DENOISE ADVERSARIAL IMAGES. TNU Journal of Science and Technology (Tạp chí Khoa học và Công nghệ Đại học Thái Nguyên). 181-189. 07/12/2023

- Ha An Le, Trinh Van Chien, Van Duc Nguyen, Wan Choi. Double RIS-Assisted MIMO Systems Over Spatially Correlated Rician Fading Channels and Finite Scatterers. IEEE Transactions on Communications. 4941-4956. 13/05/2023

- Sikandar Ali Qalati, Belem Barbosa, and Blend Ibrahim. Factors influencing employees’ eco-friendly innovation capabilities and behavior: the role of green culture and employees’ motivations. Environment, Development and Sustainability. 02/10/2023

- Thanh Le-Cong, Duc-Minh Luong, Xuan Bach D. Le, David Lo, Nhat-Hoa Tran, Bui Quang-Huy and Quyet-Thang Huynh. Invalidator: Automated Patch Correctness Assessment via Semantic and Syntactic Reasoning. IEEE Transactions on Software Engineering. 3411-3429. 17/03/2023

- Hồ Anh, Bùi Thị Mai Anh, Phương Nguyễn, Amleto Di Salle. Fusion of deep convolutional and LSTM recurrent neural networks for automated detection of code smells. EASE 2023. 229–234. Finland. 14/06/2023

- Bùi Thị Mai Anh, Đỗ Văn Trị, Bùi Quốc Trung. A Novel Fitness Function for Automated Software Test Case Generation Based on Nested Constraint Hardness. GECCO 2023. 11-14. Lisbon, Portugal. 15/07/2023

- Nguyễn Viết Chính, Bùi Thị Mai Anh, Nguyễn Mạnh Cường, Phạm Quang Dũng, Bùi Quốc Trung. Metaheuristic for a soft-rectangle packing problem with guillotine constraints. SOICT 2023. 715-722. Ho Chi Minh City. 07/12/2023

- Duy Trung Pham; Cong Thanh Nguyen; Phi Ho Truong; Nhat Hai Nguyen. Automated generation of adaptive perturbed images based on GAN for motivated adversaries on deep learning models. SOICT 2023. 808–815. TP Hồ Chí Minh. 07/12/2023

- Huỳnh Quyết Thắng, Trương Công Tuấn, Nguyễn Thị Phương Dung. Thực hiện Nghị quyết 35-NQ/TW của Bộ Chính trị về bảo vệ nền tảng tư tưởng của Đảng, đấu tranh phản bác các quan điểm sai trái, thù địch trong tình hình hiện nay: Thực trạng, kinh nghiệm và giải pháp. Hội thảo Khoa học Quốc gia "Thực hiện Nghị quyết số 35-NQ/TW của Bộ Chính trị về bảo vệ nền tảng tư tưởng của Đảng, đấu tranh phản bác quan điểm sai trái, thù địch trong tình hình hiện nay: Thực trạng, kinh nghiệm, giải pháp". 69-72. Học viện Báo chí và tuyên truyền. 14/04/2023

- Cuong Tran Hung; Tung Nguyen Nhat; Phuong Pham Viet; Phuc Nguyen Xuan; Thuan Nguyen Quang; Nguyen Nhat Hai. Enhancing Control of a 21-Level Modular Multilevel Converter through Grid-Based Model Predictive Current Control. 2023 Asia Meeting on Environment and Electrical Engineering (EEE-AM). 1-6. 13/12/2023

- Xuan Cuong Do, Hoang Dang Nguyen, Nhat Hai Nguyen, Thanh Hung Nguyen, Hieu Pham, Phi Le Nguyen. A Novel Approach for Extracting Key Information from Vietnamese Prescription Images. SoICT 2023. 539–545. TPHCM. 08/12/2023

- Sikandar Ali Qalati, Sonia Kumari, Kayhan Tajeddini, Namarta Kumari Bajaj, and Rajib Ali. Innocent devils: The varying impacts of trade, renewable energy and financial development on environmental damage: Nonlinearly exploring the disparity between developed and developing nations. Journal of Cleaner Production. 02/02/2023

- Wu Qinqin, Sikandar Ali Qalati, Rana Yassir Hussain, Hira Irshad, Kayhan Tajeddini, Faiza Siddique, Thilini Chathurika Gamage. The effects of enterprises' attention to digital economy on innovation and cost control: Evidence from A-stock market of China. Journal of Innovation & Knowledge. 02/12/2023

- Le Phuong Chi; Huynh Quyet Thang; Vu Thi Huong Giang; Ngo Tung Son; Bui Ngoc Anh. Teaching Assignment Based on NASH Equilibrium and Genetic Algorithm. IEEE Symposium on Industrial Electronics & Applications (ISIEA). 1-7. Kuala Lumpur, Malaysia. 15/07/2023

- Bùi Thị Mai Anh, Nguyễn Nhất Hải. On the Value of Code Embedding and Imbalanced Learning Approaches for Software Defect Prediction. SOICT 2023. 510-516. TP Hồ Chí Minh. 07/12/2023

- Bùi Thị Mai Anh, Nguyễn Nhất Hải. A Novel Relevance Aggregation Approach for Bug Localization. RIVF 2023. 539–545. Hanoi. 23/12/2023

- Sikandar Ali Qalati, Belem Barbosa & Shuja Iqbal. The effect of firms’ environmentally sustainable practices on economic performance. Economic Research-Ekonomska Istraživanja. 02/06/2023

- Bui Quoc Trung, Duong Viet Anh, Bui Thi Mai Anh. A Novel Meta-Heuristic Search Based on Mutual Information for Filter-Based Feature Selection. ACIIDS 2023. 207-219. 24/07/2023

- Nhat-Hoa Tran. ESpin: Analyzing Event-Driven Systems in Model Checking. Conference on Information Technology and its Applications. 368-379. Danang, Vietnam. 28/07/2023

- Nhat Tung Nguyen; Tien Phong Le; Nhat Hai Nguyen; Quang Thuan Nguyen. A Novel Approach to MPPT Photovoltaic Power Systems Using Sliding Mode Control and Iterative & Bisectional Technique. 2023 Asia Meeting on Environment and Electrical Engineering (EEE-AM). 1-6. 03/11/2023